Fund firms are gorging on data and racking up unnecessary storage costs. Their data management strategies need to change, writes Nicholas Pratt.

A data warehouse was once a simple storage facility for static portfolio data. However, appetite for data has grown exponentially, as has the ability to produce it. Now there is so much more required of a data warehouse.

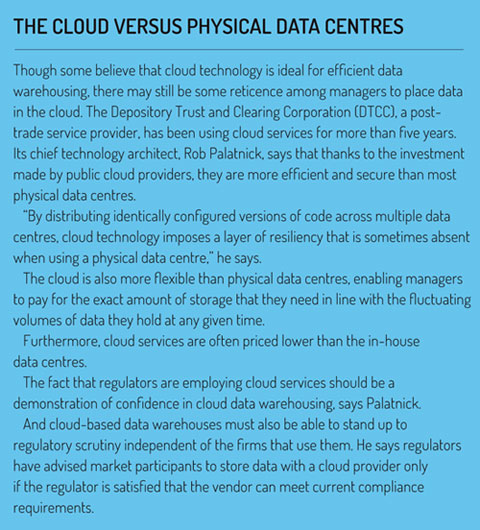

Fortunately, technology has developed to meet new requirements. ‘Virtualisation’ and cloud use have eliminated the capacity concerns of the late 1990s, but it is at the application server level that new technology has made it possible to access data quickly and feed it directly into fund managers’ models and other systems.

The potential problem is human behaviour. The availability of data has led some managers to consume it to an excessive level, says Chris Grandi, chairman and chief executive of cloud-based data provider Abacus Group. “Firms are bingeing on data right now and I don’t see that changing. Data is increasingly available and the market is increasingly competitive, and data is seen as the key to competing.”

And while there is better technology behind data warehouses, managers could still be incurring needless costs if they are not efficiently and proactively managing their data consumption and storage, says Grandi. “If you are not using that data, it is just taking up space and costing you money.”

To prevent this happening, firms need to create a data-processing strategy with a dedicated data manager or department, with the right level of communication between the portfolio managers requesting the data and the technical experts pulling that data out of the warehouse, says Grandi.

“If that communication is not clear or if the workflow is not correct, it can be an expensive process. Some clients spend $1 million or more to get their data warehouse correct.”

Very few firms will now look to develop their own data warehouse, he says. Instead, they will look to third-party vendors such as MIK, Indus Valley Partners and Lux Fund Technology.

Other providers include custodians and administrators that are looking for new revenue streams and to exploit all the fund-related data they already hold.

“Asset managers are telling us that they need help,” says Sid Newby, who works in UK business development at custody bank and fund administrator BNP Paribas Securities Services.

“They have grown through M&A and ended up with multiple front-office systems in different geographies and asset classes and they need to bring all of that data together. Commercial pressures, client demands and regulatory burden mean that the time has come to confront this challenge.”

The data warehouse has to be seen as part of the wider data management challenge that involves not just data storage but data capture, cleansing, distribution and general management, says Newby, and it is an area that custodians and asset servicers are keen to exploit.

At the other end of the market are providers of trading systems. These systems have historically dealt only with transient data that is stored elsewhere and were primarily built for speed – like a racing car, they carried only the essential features or functions.

But those days are gone, says Jim Tyler, global head of sales engineering at Portware.

Continuous loop

The execution management system (EMS) still has to process large volumes of trading data but now it has to store it, too. First, there are regulatory reasons such as MiFID II, which obligates firms to be able to reconstruct trades and explain the logic that drove their decisions. Second, there are alpha-driven reasons: investors want big data to improve their performance.

“Rather than making that a manual process, firms are looking to AI [artificial intelligence] and predictive analytics and creating a continuous loop of data – post-trade data used to make pre-trade decisions,” says Tyler.

Given the volume and breadth of data involved in this continuous loop, managers must understand which aspects of the data are most important. An EMS is still built primarily for speed and low latency, so how a data warehouse is implemented – and what use is made of external data centres or cloud services – is important, says Tyler.

A number of data management specialists are also being employed by managers who are looking to address their whole data management strategy and see a modern data warehouse as part of that plan, says Steve Taylor, chief technology officer at Eagle Investment Systems.

“Some are going through legacy system replacement; there are a lot of fragmented, disparate systems and they need more transparency and mobility around their data,” he adds. Only when they try to bring all of that data together do they realise that the monolithic data warehouses they used to build in-house are no longer fit for purpose.

“When you build your own data warehouse, you lose sight of some of the business issues, like workflow, data scrubbing and data mobility,” says Taylor.

Investment managers are often good at completing projects but not so good at providing ongoing services – and a data warehouse is now a service, says Taylor. “It needs continuous investment to keep the platform up to date and to ensure new architectural patterns can complement the more robust capabilities of a data warehouse to provide new views of data.”

And while a data warehouse is well suited to new technology such as AI, machine learning and cloud services, it requires discipline, governance and board-level involvement, says Taylor.

“For example, it is not just about dropping apps into the cloud but about rearranging your infrastructure and using the cloud as a vehicle to reimagine the data strategy.”

The digitally enhanced data warehouse that is ‘cloud-native’ and allows firms to aggregate data from multiple sources, including markets, client data and social media, is only possible if structured and unstructured data can be married together, says Mark Trousdale of digital investment management platform provider InvestCloud.

In terms of system architecture, a dedicated hub-and-spoke approach is likely to produce the best results, as opposed to adding data storage capability on to an existing portfolio or accounting system, or a system for managing client relationships, says Trousdale. “You need a unified structural approach with a unified interface. Having a single version of the integrated truth is absolutely crucial and beneficial.”

According to Trousdale, there is an answer to the moral hazard created by more efficient data warehouses and the concern that limitless storage and powerful analytics will just lead to more data binges from asset managers.

The most powerful constraint for managers and their data consumption is time, he says. “Investors are increasingly impatient when it comes to the time they have to wait for their financial services. That will be the deciding factor in the data dialectic.”

©2017 funds europe